
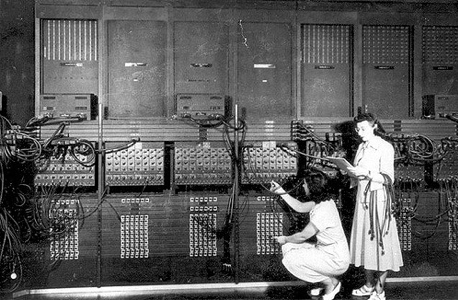

…there were no programmers, because the computers were the program. They did not really resemble modern computers in any way. They weighed tons and took up whole walls in rooms with custom-built air conditioning and ventilation for their relays and tubes, not to mention specially reinforced floors.

Long before I learned how to program, in fact quite a long time before I was born, the first computers were “programmed” by flipping electro-mechanical switches and plugging in wires on a patch panel.

The people (wearing lab coats) who operated these computers didn’t even think of the plugging and switch flipping as programming. The program was designed by electrical engineers and built into the wiring of these incredibly expensive giant electric calculators. What these people were doing was entering the problem to be calculated.

The photo below is often mislabeled “Programming the ENIAC computer”. The fact is that, like all other computers of its time, ENIAC was not programmable. What made it special, however, was that instead of having just one program hardwired into it, it had many. Each one of the panels you see in the photo below was one hardwired program. Using the patch cables, computer operators such as those in the photo could enter data into the panel that contained the hardware “program” to be run, and connect the panels together so that one program/panel ran on the results of another.

I do not mean to trivialize the work. It required a great deal of creativity and expertise, and was groundbreaking in its own right. However, it simply was not programming as most people understand the term.

Computer operators configuring ENIAC


Although Konrad Zuse[1] is now recognized as having invented the modern computer and the first programming language in Nazi Germany, his efforts remained top secret for a long time. The thing that really launched software programming as we know it today was the publication of the First Draft of a Report on the EDVAC[2] in 1945, written (or perhaps more accurately: assembled and edited) at the request of the U.S. Army by John von Neumann, a remarkable fellow with too many achievements to list here. The paper described the basis for modern computers as we know them: very fast binary calculators that store programs in machine language and execute them. The really innovative part was that the computer he described wasn’t hardwired for any programs. Programs had to be written using opcodes (which I will describe below). Circulation of this report was undeniably the catalyst for modern computer design.

In 1949 EDVAC ran its first program, read from a gigantic roll of magnetic tape. The tape spools were so massive that women like my mother were discouraged from entering the field for fear it was not possible for a woman to physically transport them to and from the computer.

It was 1949:

- Harry Truman began his first full term as president and introduced his Fair Deal

- It was the first year in which no African-American was reported lynched in the United States of America

- Canadians and Australians broke free of the commonwealth and created their own citizenships

- Mao and the People’s Republic of China took power

- Israel held its first election and joined the UN

- The German Democratic Republic was established

- NATO was formed

- Winston Churchill proposed the European Union

- The first non-stop around-the-world airplane flight happened

- The first jet-powered airliner flew

- The Soviet Union tested its first atomic bomb…

And programming was born.

“Where a calculator on the ENIAC is equipped with 18,000 vacuum tubes and weighs 30 tons, computers in the future may have only 1,000 vacuum tubes and weigh only 1½ tons.” ~ Popular Mechanics, March 1949

Then there was the word

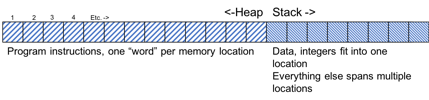

“Von Neumann” computers, as modern computers are sometimes called, all operate on some basic shared principles. They have memory divided into two “spaces” called the stack and the heap. The heap is used to store the running program, and the stack is used to store the data that is the input and output. As you will see later, some clever programmers play tricks, hiding data within code, or code within data.

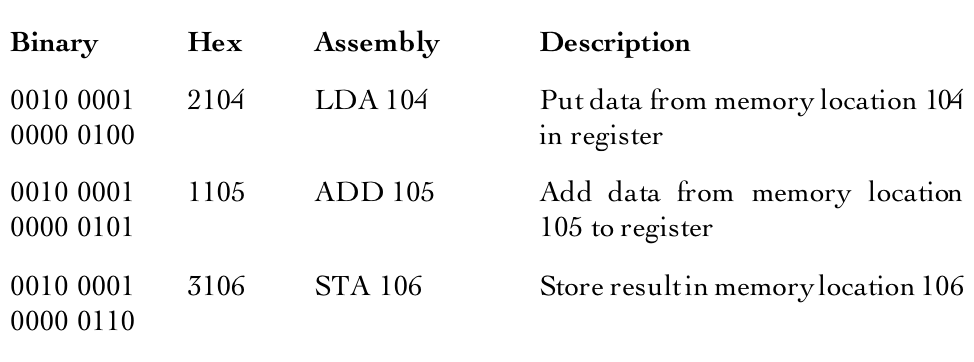

The binary[3] program is stored in the heap as a series of hexadecimal words[4]. Words are different lengths on different CPUs: very old CPUs used 8-bit words, while modern CPUs use 64-bit words. Each word triggers a specific CPU operation, which is why they are called opcodes. For example, on an old 16-bit Intel CPU, the hexadecimal number “2104” will store the number from stack location 104 in the CPU in a special memory scratchpad called a register. “1105” will add the number from stack location 105 to the first number, and “3106” will take the result and store it in stack location 106. All computer programs eventually come down to a series of words stored in memory.
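To make the idea concrete, here is a minimal Python sketch of a toy machine that runs the three example words. It assumes that the first digit of each word selects the operation and the remaining three digits are read, as written, as a data address. That is simply the pattern the example suggests, not the instruction format of any real Intel CPU, and the names `run`, `LOAD`, `ADD` and `STORE` are made up for illustration.

```python
# Toy machine for the example words "2104", "1105" and "3106".
# Assumption: first digit = operation, last three digits = data address.
# This is NOT a real Intel instruction encoding.

ADD, LOAD, STORE = "1", "2", "3"

def run(program, data):
    """Execute each word in order and return the updated data memory."""
    register = 0  # the CPU's scratchpad
    for word in program:
        op, address = word[0], int(word[1:])
        if op == LOAD:
            register = data[address]        # copy memory into the register
        elif op == ADD:
            register += data[address]       # add memory to the register
        elif op == STORE:
            data[address] = register        # copy the register back to memory
        else:
            raise ValueError(f"unknown opcode in word {word!r}")
    return data

# Data memory holds 2 and 3; the program leaves their sum at location 106.
memory = run(["2104", "1105", "3106"], {104: 2, 105: 3, 106: 0})
print(memory[106])
```

Everything the machine “knows” is in the `if` chain: change what a digit means and the same words become a different program.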

In assembly language, a three-letter mnemonic represents the binary or hexadecimal machine instruction. For almost a decade, programmers used assembly language to locate and retrieve data in memory and move it to the Central Processing Unit (CPU) for computation. The step-by-step instructions do not really resemble normal human logic in any way.

Below is a very simple program that adds two numbers. I show the binary, the hex, and the assembly instructions side by side (the data is not shown).

Here is a simple assembly language program that prints out the phrase “Hello World”. The first words on lines 5–9 are examples of opcodes (there are other opcodes in this program that are not as easily pointed out).

```asm
 1 section .text
 2 global _start
 3 _start:
 4 ; write our string to stdout.
 5 mov edx,len ; third arg: message length
 6 mov ecx,msg ; second arg: pointer to msg
 7 mov ebx,1 ; first arg: file handle (stdout)
 8 mov eax,4 ; system call number (sys_write)
 9 int 0x80 ; call kernel
10 ; and exit.
11 mov ebx,0 ; first syscall argument: exit code.
12 mov eax,1 ; system call number (sys_exit)
13 int 0x80 ; call kernel.
14 section .data
15 msg db "Hello, world!",0xa ; the string to print.
16 len equ $ - msg ; length of the string.
```

This kind of programming is called bare metal programming[5]. It is terse and difficult to understand. In those early days, computer programs were small because the capacity of computers was small.

The cell phone that you likely have in your pocket has 30 million times more storage and is about 10 million times faster than an IBM 650 was in 1954. The smallest program on your cell phone would not fit on 10,000 IBM 650s wired together. The phone hardware is also about 40,000 times smaller and lighter, uses about 400,000 times less power, and is 10,000 times less expensive. The IBM 650 cost half a million dollars in 1954, which is equal to about $4.6 million today.

For the rest of this series, I will describe the technological advances that allowed programmers to evolve ever larger and increasingly more complex programs, starting with the first programming languages.

Speaking in tongues

In 1957, FORTRAN (FORmula TRANslation) made it possible for people to write programs by composing with human logic instead of needing to remember difficult opcodes.

The way human-readable (and relatable) programming languages work is by taking a concept, such as printing out something, which as we saw just above takes a dozen lines of assembly code, and abstracting[6] that concept into one reserved word. Reserved words are not the same as binary or hexadecimal words. Reserved words are human language code words that represent whole sentences in computer words. In the case of FORTRAN, the reserved word that replaces most of the assembly program above is “write”.

A special program called a compiler expands “write” into the sixteen lines of assembly language, and other reserved words into their respective assembly language equivalents.
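The expansion step can be sketched in a few lines of Python. This is only an illustration of the idea, not how a real FORTRAN compiler works: the `TEMPLATES` table and the `expand` function are hypothetical, and the assembly templates simply reuse instructions from the Hello World listing earlier in this article.

```python
# Toy "compiler": replaces each reserved word with a canned assembly template.
# Hypothetical names; a real compiler parses, analyzes and optimizes the program.

TEMPLATES = {
    "write": [
        "mov edx,len ; third arg: message length",
        "mov ecx,msg ; second arg: pointer to msg",
        "mov ebx,1 ; first arg: file handle (stdout)",
        "mov eax,4 ; system call number (sys_write)",
        "int 0x80 ; call kernel",
    ],
    "stop": [
        "mov ebx,0 ; first syscall argument: exit code",
        "mov eax,1 ; system call number (sys_exit)",
        "int 0x80 ; call kernel",
    ],
}

def expand(reserved_words):
    """Return the assembly lines for a sequence of reserved words."""
    assembly = []
    for word in reserved_words:
        if word not in TEMPLATES:
            raise ValueError(f"unknown reserved word: {word}")
        assembly.extend(TEMPLATES[word])
    return assembly

program = expand(["write", "stop"])
print(f"2 reserved words became {len(program)} assembly instructions")
for line in program:
    print(line)
```

The programmer only ever types the two reserved words; the table lookup is the part the compiler does on their behalf.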

As we shall soon see, many early programmers didn’t like compilers. They did not trust them to do as good a job as a human. In the very beginning there may well have been merit to that, but over the years, humans transferred a lot more of their “smarts” into compilers. Also, as computer capacity got larger, the programs got a lot larger and more complex. It would be almost impossible for a modern programmer to beat a compiler, and nobody really tries.

But in the old days, even when using a compiler, programmers were still “close” to the metal. They usually knew what the compiler was doing, and what “choices” it would make when it “saw” certain patterns in the human-readable language. Good programmers would write their human-readable code in specific ways meant to force the compiler into “doing the right thing”.

Here is the “Hello World” program in FORTRAN:

```fortran
implicit none
write ( *, '(a)' ) ' Hello, world!'
stop
end
```

As you can see, writing in FORTRAN reduced the number of programming statements required, on average by a factor of twenty.

The increase in programming productivity was an order of magnitude, and the longer or more complex a program is, the more significant the gain becomes. The examples I give you are very short. With a larger program (tens or hundreds of thousands of lines) the difference is much more dramatic. Writing five hundred lines instead of ten thousand lines is a much bigger productivity improvement than writing four lines instead of sixteen.
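For readers who want to check the arithmetic, here is a small Python sketch that uses only the two pairs of figures from the paragraph above. The function name `savings` is made up for illustration.

```python
def savings(assembly_lines, high_level_lines):
    """Return (reduction factor, number of lines the programmer no longer writes)."""
    factor = assembly_lines / high_level_lines
    saved = assembly_lines - high_level_lines
    return factor, saved

# The two comparisons from the text: Hello World, and a larger program.
for asm, high_level in [(16, 4), (10_000, 500)]:
    factor, saved = savings(asm, high_level)
    print(f"{asm} -> {high_level} lines: {factor:.0f}x fewer, {saved} lines saved")
```

The small example shrinks by a factor of four and saves twelve lines; the large one shrinks by a factor of twenty and saves 9,500 lines.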

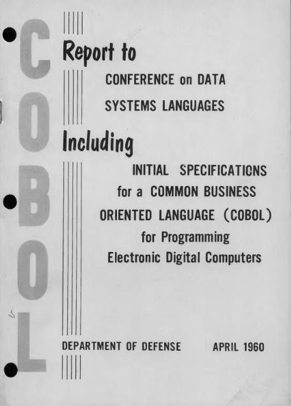

A few years earlier, Grace Hopper had created a language for business use called FLOW-MATIC, based on her belief that people should be able to program a computer using plain English and have the computer convert the English words into machine instructions for itself. Few people have heard of FLOW-MATIC, but many people have heard of COBOL (COmmon Business Oriented Language) which was based in very large part upon FLOW-MATIC and was considered by many to be more human readable than FORTRAN.

```cobol
       IDENTIFICATION DIVISION.
       PROGRAM-ID. HELLO-WORLD.
      * simple hello world program
       PROCEDURE DIVISION.
           DISPLAY 'Hello world!'.
           STOP RUN.
```

It must be said that COBOL programs are disliked by many programmers for being extremely wordy, which could be considered a productivity decrease.

The MIT Jargon File[7] has this to say:

COBOL fingers /koh’bol fing’grz/ n. Reported from Sweden, a (hypothetical) disease one might get from coding in COBOL. The language requires code verbose beyond all reason (see candygrammar); thus it is alleged that programming too much in COBOL causes one’s fingers to wear down to stubs by the endless typing. “I refuse to type in all that source code again; it would give me COBOL fingers!”

Nevertheless, making programming accessible to a greater number of people constituted a productivity improvement on the whole, and it was still a lot less code than assembly.

In the late fifties, two other influential languages came into being at the same time: LISP, which is sometimes still used today for AI (Artificial Intelligence) programming, and ALGOL (ALGOrithmic Language). Just about every language in common use today is a descendant not of FORTRAN or COBOL, but of ALGOL (sometimes with a little LISP influence thrown in for good measure). But those descendants would only start to appear twenty years later.

The next decade belonged to the computer manufacturers and the electrical engineers. They would improve programming productivity not through languages, but through hardware and operating systems.

“Here is a language so far ahead of its time, that it was not only an improvement on its predecessors, but also on nearly all its successors.”[8] ~ Sir Charles Antony Richard Hoare, Turing Award-winning computer scientist, speaking about ALGOL

[1] For those who are interested, I have included an appendix that contains a list of all the people I mention in the book, along with basic biographical information.

[2] Von Neumann, John. “First Draft Of A Report On The EDVAC”. 1945, Moore School Of Electrical Engineering, University Of Pennsylvania, doi:10.5479/sil.538961.39088011475779.

[3] You can learn about binary on YouTube: https://youtu.be/lsCKJ6se_1w, and about bits and bytes at https://youtu.be/b7pOcU1xMks

[4] Whereas our familiar counting system is base 10 and binary is just base 2, a hexadecimal system is base 16.

[5] http://www.catb.org/jargon/html/B/bare-metal.html

[6] I will provide an in-depth explanation of what abstraction means in computing later on, in Chapter Four.

[7] “The Jargon File is a glossary and usage dictionary of computer programmer slang. The original Jargon File was a collection of terms from technical cultures such as the MIT AI Lab, the Stanford AI Lab (SAIL) and others of the old ARPANET AI/LISP/PDP-10 communities, including Bolt, Beranek and Newman, Carnegie Mellon University, and Worcester Polytechnic Institute”. https://en.wikipedia.org/wiki/Jargon_File

The whole text is available at http://catb.org/jargon/html/index.html.

I read it with religious fervor when I was in my larval stage. You can look up larval stage in the Jargon File.

[8] C. A. R. Hoare. 1973. Hints on Programming Language Design. Technical Report. Stanford University, Stanford, CA, USA.

This article is an excerpt from my upcoming book The Chaos Factory, which explains why most companies and governments can’t write software that “just works”, and how it can be fixed.

The Metal years: 1950–1960 was originally published in Hacker Noon on Medium, where people are continuing the conversation by highlighting and responding to this story.
