
If the previous two decades were the dark ages, the 1970s were the Renaissance: a bridge between the classical era of computing and the modern era of programming. It was a time of great change for Western society in general, and the world of programming was no exception. Programming was on the verge of becoming the activity we know today; it was becoming possible to write code without an intimate knowledge of the computer hardware. CRT-based video display terminals (VDTs), first introduced with IBM’s System/360 and originally costing as much again as the computer itself, became affordable and common. New programming languages changed the way people worked, and by the end of the decade, personal computers had become available at reasonable prices.

The 1970s are also when my narrative starts to become personal. Although I myself did not start to program until the eighties, the programmer culture and history of the seventies were still a very real and contemporary presence for me as I learned the craft. The people I write about, most of whom were heroes to me, were in the prime of their careers when I was just starting mine, and I have personally used most of the systems and languages that I will write about from this point on.

One system that was tremendously important was Unix™. It was created by a team of five programmers at AT&T Bell Laboratories and was portable from one hardware platform to another. Its distribution included the source code for the OS itself. For this reason, Unix™ was “ported” to many different hardware platforms. It was the beginning of operating systems that were independent of specific hardware platforms. It would be difficult to overstate the influence that Unix™ has had on computing in general, but more specifically on the craft¹ of programming.

From the perspective of personal computers (my own perspective), which initially ran crude little operating systems like MS-DOS and CP/M, Unix™ had the aura of “the big leagues”: rich in features, impressively flexible, at times arcane and inscrutable, at others powerful and simple. When I started working in Unix™ I felt I had arrived. To my bosses, I was elite. When GE Information Services was asked by GM’s Electronic Data Systems to set up a Unix™ server farm to control the computerized painting of Camaro automobiles at their Sainte-Thérèse plant, I was the one they sent. I knew Unix™! However, from the perspective of the real big leagues (operating systems for mainframes and minicomputers), nothing could be further from reality.

Unix™ was developed by a team working on a “real” operating system: Multics. In those days “real” operating systems (used by “real” programmers) ran on huge, powerful, extremely expensive computers. One of their jobs was to manage this expensive resource as carefully as possible. Therefore, significant portions of their code were dedicated to complicated user management, with accounts that had quotas or allocations of computer time, and code to remove time from the account as program “jobs” were run. They managed the separation between jobs, the “time-slicing” that optimized the use of the CPU, the communications protocols to terminals and disks and printers, and a long list of other things besides.

The name Unix was a joke, a play on words. Designed as a playground for developers, it intentionally lacked all the complicated controls of Multics, and so it was Un-Multics; it was Uni-user because it lacked what were considered standard multi-user features. But at the same time, the OS itself was a very serious endeavor. It was meant to be a productive platform for programmers to develop software and it had a single unifying philosophy: “the idea that the power of a system comes more from the relationships among programs than from the programs themselves”.² It had architectural coherence: terminals, printers, and even disks and network adaptors were all treated the same way through a single simple communications model.

And it was programmable.

When one purchased Unix™ from AT&T (for the princely sum of twenty thousand dollars) it came with the source code. The implications of that were staggering for the time. Until then, operating systems were almost exclusively created by the computer hardware manufacturers for a specific computer or series of computers and were jealously guarded trade secrets. Modifying the OS in any way was not only impossible, it was unthinkable. By the unorthodox inclusion of the source code, AT&T made it possible for any licensee to do two things that were completely novel: they could modify it, and they could compile it and run it on any computer that had a C compiler (which very quickly became quite a few). That meant that a programmer could replace the original operating system that came with the computer!

Not that AT&T was completely free from the closely guarded trade secret mentality. Every time I have written Unix above I included a trademark symbol next to it. This is an in-joke for any reader who happened to have been a programmer in the 70’s and 80’s. AT&T was infamous for its fanatical pursuit of trademark infringement. Usenet lore had it that even a casual mention of Unix without the ™ would bring down a storm of corporate legal aggression so fearsome that it could take years off of one’s life. As such, many of us carefully, and very ironically, inserted (tm) after every mention of the name. I hope I gave a smile to at least one or two readers who remember those days.

So how did Unix help improve productivity in programming? Because of its philosophy of placing a premium on interactions between programs, Unix dictated a standard simple protocol by which programs would exchange data with other programs and with the computer and all its peripherals: text.

This sounds deceptively trivial. It is anything but. Anyone with even passing technical familiarity with Unix has heard the phrase: “in Unix, everything is a file”. What this means in practical terms is that a program does nothing differently at all to send the sentence “Hello World” to a printer, to the screen, to another program, or to a file on disk for storage because all of these things appear to the program as a file on disk. In fact, in traditional Unix usage, the program would not do any of these things itself. The user (who is also a programmer) would. The user would type the command HelloWorldProgram > lpt1 to direct the “standard output” (called stdout) of the Hello World program to Line Printer 1 or HelloWorldProgram | WordCount to pipe the output of Hello World to a program that would count how many words it received on its “standard input”. By standardizing the way programs exchanged data with anything outside of themselves Unix promoted a way of thinking that was to have a profound effect on the way programs were written. When you use your Facebook account to log into some other website, you are benefiting from the philosophy that Unix pioneered of different programs working together seamlessly. When your phone opens the map program to give you directions, or opens the email program to send an email, it is because Unix taught programmers that “the power of a system comes more from the relationships among programs than from the programs themselves”.
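As a minimal sketch of that idea (in Python rather than a period language, and with function names invented purely for illustration), the two "programs" below only ever see a file-like object. An in-memory buffer stands in for the pipe; neither function knows or cares what is on the other end.

```python
import io
import sys


def hello_world(out):
    """Write a greeting to whatever file-like object we are handed."""
    out.write("Hello World\n")


def word_count(inp):
    """Count whitespace-separated words arriving on any file-like input."""
    return sum(len(line.split()) for line in inp)


# Same function, different destination: the screen...
hello_world(sys.stdout)

# ...or a "pipe" feeding another program (an in-memory buffer stands in here).
pipe = io.StringIO()
hello_world(pipe)          # roughly: HelloWorldProgram | ...
pipe.seek(0)               # rewind so the reader starts at the beginning
words = word_count(pipe)   # roughly: ... | WordCount
print(words)
```

The point of the sketch is that the decision about where the output goes is made outside `hello_world`, by whoever wires the pieces together.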

Because of this, Unix programs are often small, doing one thing only, such as tail, which reads in a file (remember: everything is a file) and sends the last 10 lines to its standard output, and it is normal for programs to be written with other programs in mind. For example: the Unix program man, which shows the user manual entries for various programs. The author of man did not bother to write code to break up long manual pages into readable chunks, because according to the philosophy of Unix, the output of man should be piped to another program such as either less or more, which are “paging” programs that break long files (remember: everything is a file) into pages the size of the screen, allowing the user to “turn” the pages by pressing the space bar.

Because of this philosophy, the programmer of man was able to leverage the previous work done by the programmer of less (or more). As you might imagine, this new approach to writing programs had a dramatic effect on productivity.
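The same division of labor can be sketched in a few lines of Python. This is an illustration only, not how tail, less, or more are actually implemented: `tail` and `pages` are hypothetical stand-ins, and the 25-line "manual" is made up.

```python
from collections import deque
from itertools import islice


def tail(lines, n=10):
    """Return an iterator over the last n lines of any iterable of lines."""
    return iter(deque(lines, maxlen=n))


def pages(lines, page_size):
    """Group lines into screen-sized pages, one list per page."""
    it = iter(lines)
    while True:
        page = list(islice(it, page_size))
        if not page:
            return
        yield page


# A made-up 25-line manual page.
manual = [f"line {i}" for i in range(1, 26)]

last_ten = list(tail(manual))                # what tail alone would emit
paged = list(pages(manual, page_size=10))    # what a pager alone would show
combined = list(pages(tail(manual), 4))      # the two chained together
```

Neither function knows the other exists, yet chaining them gives "the last ten lines, four to a screen" without any new code.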

Smaller programs are easier to write, have fewer bugs, are easier to debug when needed, and are easier to maintain. If you clean your kitchen a little bit every day, it is a lot easier than trying one big cleanup every month. Programming is exactly like that, so writing smaller programs that rely upon other previously written (and tested and debugged) programs is more productive than writing big “kitchen sink” programs. Or as I call them: “world domination programs”.

This is a very important idea. It is a central concept to the book I am writing, of which this series is an excerpt. I deeply believe in the Unix philosophy that the best way to approach programming is by writing small program components within a powerful unifying framework, and then linking them together.

There was one more thing…

In 1972 the Unix team created a language to help them write their operating system. The language was called C. It is arguably the most influential programming language ever created. I could also argue that it set productivity back by a couple of decades.

Productivity

The word productivity comes with a lot of baggage. For many years programmer productivity was measured in lines of code per day/week/month. There are several flaws in this method.

Let’s take a small game program called life and have two programmers of different skill levels write it in two languages: C and assembly. In C this is a 57-line program. The assembly language version is 97 lines. Let’s say that the C programmer takes sixty seconds per line (this is actually very fast) and the assembly programmer writes code even faster, at the rate of one line every 40 seconds. It will take about 65 minutes to create the assembly language version versus 57 minutes for the C version, so the assembly version took roughly 14% more time, even though the assembly programmer writes 50% more lines of code per day.
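Working the figures through from the stated line counts and per-line rates (a quick sketch; the variable names are mine):

```python
# Line counts and per-line rates taken from the example in the text.
c_lines, c_secs_per_line = 57, 60
asm_lines, asm_secs_per_line = 97, 40

c_minutes = c_lines * c_secs_per_line / 60        # total minutes for the C version
asm_minutes = asm_lines * asm_secs_per_line / 60  # total minutes for the assembly version

# How much longer the assembly version takes, as a fraction of the C time.
extra_time = asm_minutes / c_minutes - 1

# How many more lines per unit of time the assembly programmer writes.
extra_line_rate = c_secs_per_line / asm_secs_per_line - 1

print(f"C: {c_minutes:.1f} min, assembly: {asm_minutes:.1f} min")
print(f"extra time: {extra_time:.1%}, extra lines per day: {extra_line_rate:.0%}")
```

Unrounded, the assembly version takes about 13.5% longer to finish, while its author produces lines 50% faster (equivalently, each line takes 33% less time). The two measures point in opposite directions.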

Can we say that the assembly language programmer was more productive? If we measure by lines of code a day, she definitely was. If we measure by time spent to achieve results, no. Things get even less clear when you consider that the two programmers may be making different salaries because a programmer that writes fewer lines might also be paid less and therefore cost less “per line”.

Can we at least compare two programmers making the same salary, working in the same language? Let us consider this case: two programmers write the game of life in C. Captain Slow, as we shall call him, takes an entire day to write 97 lines of highly optimized code that takes 100 kilobytes of disk and loads in less than a second. Captain Showboat takes the same day but manages to use 970 lines of code where 97 would do just fine, producing a program that takes up one megabyte of disk and takes 10 seconds to load.

Now tell me: who is the more productive programmer?

More code does not automatically make a program better. Do we really want to incentivize people to write more lines than necessary? Bill Gates famously denigrated measuring productivity by lines of code by calling it a race “to build the world’s heaviest airplane”.³ Later on in the series, I’ll quote Larry Wall on the same basic idea.

Another favorite unit of measure is “function points”, a conceptual unit that is supposed to represent a discrete functionality for the end user. For example, the ability to log in would be one function point (FP). The ability to change your profile photo would be another. In the real world, almost nobody counts the function points or has the ability to track how much programmer time was spent on a given FP. Therefore, almost everybody who measures productivity in FP/day “cheats” by using industry standard conversion factors that say: this language typically uses this many lines of code per FP, and that language typically uses that many lines. Then you take the lines of code and divide by the conversion factor, which turns it into function points. Obviously, this is no better than the first method. There are two or three other methods, none of them any better.
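To see why the conversion shortcut adds nothing, here is a small sketch. The conversion factors are invented for illustration and are not real industry figures; the line counts reuse Captain Slow and Captain Showboat from earlier.

```python
# HYPOTHETICAL conversion factors (lines of code per function point).
# These numbers are made up for illustration only.
LOC_PER_FP = {"C": 100, "assembly": 300}


def function_points(lines_of_code, language):
    """Back-derive 'function points' from a line count, as the shortcut does."""
    return lines_of_code / LOC_PER_FP[language]


slow_fp = function_points(97, "C")       # Captain Slow: 97 lines in a day
showboat_fp = function_points(970, "C")  # Captain Showboat: 970 lines in a day

print(f"Captain Slow: {slow_fp:.2f} FP/day")
print(f"Captain Showboat: {showboat_fp:.2f} FP/day")
print(f"ratio: {showboat_fp / slow_fp:.1f}x")
```

Within one language the factor is a constant divisor, so Captain Showboat still appears ten times as productive. The "function points" are just lines of code in different units.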

Therefore, when I write about productivity in software development, I am appealing to common sense, not metrics. I consider an improvement in productivity to be anything that helps get the software product successfully completed faster or better without costing more.

Of novices and masters

My reason for saying that C could possibly have set productivity back by a few decades is deeply connected to the central thesis of my book that modern programming is artisanal and cannot succeed without master programmers. My absolute favorite programming joke (from the Jargon File, of course) is written in the style of a koan, a riddle intended to help a Zen monk achieve enlightenment.

A novice was trying to fix a broken Lisp machine by turning the power off and on.

Knight, seeing what the student was doing, spoke sternly: “You cannot fix a machine by just power-cycling it with no understanding of what is going wrong”.

Knight turned the machine off and on.

The machine worked.

Tom Knight, one of the Lisp⁴ machine’s principal designers, knew what he was doing when he power-cycled the machine, which is why it worked for him and not for the novice, who had “no understanding of what is going wrong”.

C is like that; it is almost assembly language. It is powerful, and it is dangerous. It is magic. In the hands of a master programmer, the proper incantations in C can be used to write an operating system, another language, or operate devices that our very lives depend upon such as the anti-lock brakes on your car. In the hands of people not quite sure of what they are doing, the magic spell could destroy a piece of hardware, wipe out data, or leave a subtle bug that will not be discovered for a long time and will be almost impossible to find once the undesired side effects are noticed.

C brought programming back to the days of Mel (see the previous chapter in this series), whose brilliant trickery would have been impossible in FORTRAN, COBOL, or ALGOL. It would also be impossible in the other new languages becoming available at the time: BASIC, Pascal, Forth, and Smalltalk. Yet in C you have only to point to a memory address and away you go. Just like Mel.

And here’s the thing:

If every programmer were as good as Mel, this kind of programming would be just lovely. We would all be using optimized code that ran super-fast and didn’t make mistakes. But the unfortunate reality is that not every programmer is that good. And it was just as C was released that things were going to get a lot worse.

Barbarians at the gate

Up until now, because of the cost of computers, there were mostly two kinds of programmers: a) professionals working for government or large corporations, and b) academics, often postgraduates, using programming for research. Both kinds were almost exclusively self-taught, very smart, and almost always with a real affinity or talent for coding.

But now, in the seventies, two important things changed. First, academic institutions were creating curricula specifically for programming, creating new “teaching languages”, and most importantly, undergraduates (and the occasional lucky high schooler) were being given time-sharing accounts specifically for the purpose of learning how to program.

The second thing that changed was the advent of personal computers, first as kits assembled at home, then later as attractively packaged consumer goods that sold for the same price as an old used car. It was going to get a lot easier for those who did have an affinity to teach themselves. The first mass-produced computer kit, the Altair 8800, shipped 5,000 units in 1975, its first year of production. In three years (1977–1979) the two top personal computers, the Tandy TRS-80⁵ and the Apple II, sold more than 150,000 units. That is approximately how many computers existed in the entire world at the beginning of the decade. The BASIC language was just about the only software that was included.

It just got a heck of a lot easier to get anywhere near a computer to begin with.

Hundreds of thousands of new programmers were either being taught or were teaching themselves, and they would soon be unleashed into the world, a world that was hungry for computer programs. This would soon dramatically swell the ranks of programmers.

It was no longer so very hard to become a programmer, and programming was becoming known as a promising career that one could train for. Once the object of passion and care, programming had started the journey to becoming a lucrative career that would eventually attract all sorts of people who, with no particular passion or affinity for programming, were only in it for the money.

Robert Martin, of whom I will speak later in this book, is outspoken about an inexplicable shift he has seen in his lifetime. The cohort of programmers was fairly gender balanced in the fifties and sixties, and then very quickly turned into a male dominated field in the seventies.

It occurs to me that a plausible explanation for the disparity could be the shift of programming from being an obscure vocation that only attracted people based on merit and passion, to being an attractive and lucrative career that was becoming as respected as electrical engineering.

When programming was an obscure vocation, there was little competition. I think it is possible that once programming became a lucrative and desirable career, competition was fierce, and in a male dominated society, institutional bias ensured that the specialized education and choice careers went to men.

Of myth and man

The last thing I want to tell you about from the seventies is the publication in 1975 of The Mythical Man-Month. This oft-quoted book by a former IBM manager named Frederick P. Brooks Jr. describes the lessons he learned ten years earlier while managing one of the biggest software development projects to that date, the System/360 operating system.

It is astonishing to me that in re-reading it now, I cannot find a single analysis or observation that is not as pertinent and trenchant today as it was over forty years ago when this book of essays first came out. The Mythical Man-Month was a seminal work. It is impossible to overstate its significance and the impact and influence it has had on the entire computing industry. Although I think no one at the time recognized it, it was the first comprehensive software development lifecycle (SDLC) methodology. Not only did it describe the challenges faced by systems programming, it prescribed (in over 150 pages):

- the phases of SDLC

- the allocation of time for each cycle

- how to estimate

- how to staff

- how to structure the organization

- how to manage the design of the program

- how to plan iteration

- documentation and governance

- communications protocols

- how to measure productivity

- some special concerns around code design

- deliverables and artifacts

- tools and frameworks

- how to debug and plan releases

- how to manage the process

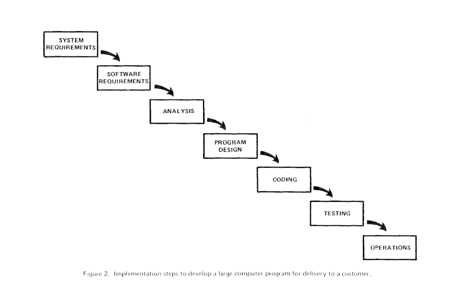

It is true that a version of the step-by-step path in the figure above had appeared in W.W. Royce’s 1970 paper Managing the Development of Large Software Systems, but contrary to what many people believe, Royce was not advocating its use. In fact, he issued warnings about using it. He was simply describing common practice of the time and some of the problems associated with it, and as Brooks did five years later, he made some proposals on how to mitigate the issues.

However, Royce’s recommendations filled ten pages, compared to the 150 pages of detailed prescriptions that are in The Mythical Man-Month. Royce’s paper is not so much a methodology as a call to action. That is why I credit Brooks with the first written methodology.

I’ll talk more about methodologies a few chapters from now, but not just yet. There are still a few stories to tell about languages in the eighties.

“Do NOT simply read the instructions in here without understanding what they do.”

The configuration file that comes bundled with the Apache webserver

[1] Programming is both an art, as Knuth would have it, and a craft. The two are not mutually exclusive. When talking about creativity it is art. When talking about rigor and mastery it is a craft.

[2] Kernighan, Brian W., and Rob Pike. The UNIX Programming Environment. Englewood Cliffs, N.J., Prentice-Hall, 1984.

[3] “The Physicist”. Wired, 1995, https://www.wired.com/1995/09/myhrvold/.

[4] You will probably remember I mentioned LISP in Chapter One, an influential computer language in use since the late 1950s.

[5] My little brother was one of those people. I, a musician, was interested in his TRS-80 for all of ten minutes one day. If you had told either one of us that day that he would become a professional artist, and I would be a computer geek, we both would have thought you were completely crazy.

This article is an excerpt from my upcoming book The Chaos Factory which explains why most companies and government can’t write software that “just works”, and how it can be fixed.

The winds of change: 1970–1980 was originally published in Hacker Noon on Medium, where people are continuing the conversation by highlighting and responding to this story.
