Server Side Concurrency Side Effects. Multiple authors.

In the beginning there was Node.js. It was an adaptation of a “client-side” language, now capable of working on the server. And it was good.

Then the paths of server-side JavaScript and its client-side brother drifted apart, and they met again years and years after.

Nowadays you can run client-side code on the server, and code created for the server on the client. Or use the same code for both. That’s why it’s so cool!

But this story will tell you how to tell the two JavaScripts apart again.

Server Side Rendering

What defines “Modern JavaScript”? React, Angular, Vue. SSR was not needed by everyone, and thus was forgotten. Modern JS was created for SPAs – something “complete” on its own, something without a server, sometimes even without the “web”.

People didn’t need Server Side Rendering, or continued to use “old” solutions – PHP, C#, or Java based backends. Or express, but only as an API layer.

Nobody gave a crap about SSR. I would say that before React 16 it was not a thing, since it was quite slow (and did not support streams).

As a result, modern JavaScript, the thing we build for the browser, is not “ready” for servers. Let’s face the truth:

- SSR is hard, flaky and buggy, because some libraries “leave” something behind after their work: their side effects. These libraries were built with the client side, not server rendering, in mind.

- “window” is required. Some libraries store something very important in window, document, local scope, anywhere. Styles in <head/> from styled-components, for example. Any “global” object is a killer. And “modern” SSR forces you to have a fake window, or even document, as globals: libraries will try to use them, or fail. And usually those are some of the major libraries in your application.

- And one more, bonus, thing – client and server should either be different, or be the same. I mean either absolutely different, working differently and serving different needs, or the same, generated by the same webpack bundle to “fit” each other perfectly. You know – React hydrate expects that perfect match.

Let’s face the truth – JavaScript, not as a language but as an ecosystem, is not very stateless. There was no need for it, since any “garbage” would be cleaned up on tab close or page change. Statelessness was good to have, but not mandatory. As a result SSR, powered by side-effect-ish solutions, could lead, if not to severe memory leaks, then to private data leakage, because memoization strategies are not compatible with concurrent usage by different server renders.

On the client side there is only one render in one tab for one client – on the server you could process 100500 renders simultaneously.

Client side is like a home theatre – cool, but created for your personal usage. Server side is more like real theatre – big screen, people eating popcorn, rubbish, video pirates, absolutely another level of equipment.

Concurrency is not a big thing for synchronous renderToString, but for renderToStream, or a “full” render, also very popular on the server side – it’s a killer. Murderer.

Issue 1 — Concurrency

On the server side you may have 100 different client requests processed simultaneously by one single nodejs process. You will call the same function, say a selector reading from a database, for different renders/clients, one microtask after another.

Some libraries, like Reselect or memoize-one, would not work in such conditions by design. They were designed to hold only ONE result, for only ONE client, at ONE point in time, because this is what a “real” (executed in a real browser) application needs: to be used by a single client from a single device.

But failed memoization is just a speed problem. Not a big problem – you will have to pay a bit more for servers, and nothing more. Memoization that does not fail is the real killer – different renders could leak one into another, causing very serious issues, like exposing information of one client to another. Because, yet again, most memoization libraries were created for a single-client environment. Exposing private information is usually not welcome, and could lead to “company ending”. And yes – this case would be much, much more expensive.

Keep in mind – JS is single-threaded, but that does not protect asynchronous code from interleaving. Any Promise, any await, gives another microtask a chance to execute. That microtask could prepare data for another client, and then execution would return to the original task – but it would now be “dirty”. It could render data it shouldn’t. That’s unfortunate.
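To make that hazard concrete, here is a minimal sketch of two renders sharing one piece of module-level state. All names in it (`currentUser`, `tick`, `render`, `demo`) are made up for illustration; a real application would hide the same pattern inside a memoized selector or a library singleton.

```javascript
// Module-level state: harmless in a browser tab, shared by every request on a server.
let currentUser = null;

// Stand-in for any asynchronous step (database call, fetch, stream chunk).
const tick = () => new Promise((resolve) => setImmediate(resolve));

async function render(user) {
  currentUser = user;               // remember who this render is for
  await tick();                     // yield: other renders may run here
  return `Hello, ${currentUser}!`;  // reads whatever value is stored now
}

// Two requests handled concurrently by the same process.
function demo() {
  return Promise.all([render('alice'), render('bob')]);
}

demo().then((pages) => {
  console.log(pages); // [ 'Hello, bob!', 'Hello, bob!' ]
});
```

Alice gets Bob's page: the second render overwrote the shared variable while the first one was waiting.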

Even if this is a theoretical (sure) situation, and your code is not affected (sure!), and you double checked, even “tested” it (for sure!) – many companies decided to use different nodejs processes to render different pages, or better say – use one process per render, as “real browsers do”. Or one puppeteer “tab” per render, and then trash it to prevent any possible data/memory leaks.

And yes – some companies use puppeteer to do a “server” render, because their application was constructed to work only from the “client perspective”: to use browser APIs, to be a browser-first citizen, to make fetch requests to their backend, a “different” backend, providing only an API layer and probably not written in JavaScript. Or just not producing HTML.

Issue 2 — Strange ways to render stuff

First of all – this approach with puppeteer is working, and is working great. It would “SSR” any application you can imagine, if only that application could work in browser. And, for sure – it could.

Second – this approach is not working, because it requires much more computation: it’s 10 times more expensive from the CPU (or AWS bill) point of view. It also does not work very well because there is no “definition of done”: puppeteer has no idea when rendering is complete, and is usually configured to wait at least 500ms after the last network request (networkidle0 mode) – a very “safe”, but not very performant mode.

XXms to create a new page, plus XXXms to render the app, PLUS 500ms to catch the “we are done” event. And a few more ms to clean up. In PHP you may render everything in 40ms. 300 in the worst case. Is JS (React?) SSR a good thing? Is it doing it right? Is it doing it?

Let’s be honest — modern JavaScript ecosystem for SSR is just sick:

- It is slow, by design.

- It requires a lot of CPU and memory.

- Data could leak between renders.

- The rendering process repeats the client-side way of getting shit done, while some “client side” things are not welcome on the server.

The reason behind all of this is simple, and single – there are differences between client and server, and libraries, born for SPAs, were born for Applications, not for Servers.

“Frontend” libraries were not designed for server environment, – captain Obvious.

And server libraries were not designed for the client side, says the same captain. But the main problem is that we have to use client-side libraries. We have to use libraries not designed to work “forever” on a server, because we are rendering the same components we would render in the browser to present to a customer.

At the same time we might use “server” libraries, like crypto or even moment.js, not designed to be “compact”, on the client, and the bundle size will grow and flourish.

Have you heard that old joke about the gorilla/banana problem? The jungle is already all around!

Every “side” has its own constraints. Bundle size, which plays no important role on the server, is super crucial for the client. “Speed” is important for the client, but is even more important for the server, since it has to process a bazillion requests per second. Or overheat and die.

How to solve SSR

So – it’s clear, that everything is broken. Let’s fix it now.

As a good example let’s refer to the nextjs documentation, which itself refers to an article about a one-page app, 40 lines of JS long, with a compilation time of about 1 minute and a few megabytes in the resulting bundle, because some server side stuff got accidentally bundled.

Issue 3 — Client versus Server

What was the problem? There was a life cycle method with different code branches for client and server. Webpack had no idea which one would be used, and “bundled” both. Webpack did its job right. But the “server side” dependency, “faker”, was HUGE, since it was meant to be used only on the server. And never, _never_, on the client. As a result – something went wrong.

What were the proposed solutions?

- Use a “fake” require. Just hide require from webpack, so it does not bundle faker (that server side module).

- Use a special plugin to remove this library from the bundle after it has been bundled.

- Mark faker as an external library, so webpack will not bundle it, since you promised to provide it yourself.

All proposed solutions work. All are wrong. All are super wrong, and the problem existed just because the code is wrong. This is that rare case when you should obey general computer science rules. So, what is the mistake?

So yes – the “real” mistake is using some tricky shortcuts, which worked just by accident.

Mistake 1 — dead code elimination

There is no “dead code elimination” in the example and its solutions. Code for the server and code for the client exist simultaneously. The fork is based on a function argument; as a result both branches exist and are valid at build time.

The right way to handle this is to introduce an environment variable which controls the fork. Everybody does this with process.env.NODE_ENV === 'production' or 'development', and you should do the same for Server/Client switches.

Now it is obvious to webpack which branch will never be executed, and it will “dead code eliminate” it. I hope this is obvious to you as well.
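A minimal sketch of such a switch, assuming a made-up variable name `process.env.RENDER_TARGET` and made-up helpers `defineRenderTarget` and `getCustomerName` (the return strings are placeholders for real client and server code). The object returned by `defineRenderTarget` is in the shape webpack's DefinePlugin accepts, so each bundle gets the variable replaced by a literal at build time.

```javascript
// Build-time definitions, one object per bundle target.
// In webpack.config.js this object would be passed to
// `new webpack.DefinePlugin(...)`, which replaces the expression
// in the source with the given literal.
function defineRenderTarget(target) {
  return { 'process.env.RENDER_TARGET': JSON.stringify(target) };
}

// Application code forks on the constant, not on a function argument.
function getCustomerName() {
  if (process.env.RENDER_TARGET === 'server') {
    // In the client bundle the condition becomes `"client" === 'server'`,
    // a constant false, so this branch (and any require inside it)
    // can be dropped.
    return 'generated on the server';
  }
  return 'fetched by the client';
}
```

The same function still works in plain Node without a bundler, because there `process.env.RENDER_TARGET` is just a regular environment variable.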

Mistake 2 — business logic in the component.

You’d better ask – “Why did that code, the code we just eliminated, even exist?”. Where was the “Separation of concerns”?! I am begging you – if you need to “DO” something, just call an API method from the underlying “API Layer” you should have as a part of a “tiered” architecture, and “DO” it.

Just extract everything extractable to another module, making this one more clean. Separate things, like Redux does.

Let’s try to write code for this idea —
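A minimal sketch of what such a component could look like, written as a plain object so it runs without React. Only `getCustomerName` comes from the text; the `api` object, `IndexPage` and the hard-coded name are stand-ins. In a real app the API layer would live in its own module (for example `api/customer`) and be imported.

```javascript
// Hypothetical API layer, inlined here only to keep the sketch self-contained.
const api = {
  async getCustomerName() {
    return 'Jane Doe';
  },
};

// The page only asks for data; it does not know where the data comes from.
const IndexPage = {
  async getInitialProps() {
    return { name: await api.getCustomerName() };
  },
  render({ name }) {
    return `<h1>Hello, ${name}</h1>`;
  },
};

IndexPage.getInitialProps().then((props) => {
  console.log(IndexPage.render(props)); // <h1>Hello, Jane Doe</h1>
});
```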

Short, sound, compact. Not giving a shit about implementation details. Just doing its job.

What could the getCustomerName method look like?

- Probably you could just copy paste the old code inside?

- Use the environment variable to control code for client and code for a server.

- And think, think hard about the reason behind this refactoring. Does this action, just moving code from one place to another, solve anything?

This approach seems to be wrong — it does not solve anything, just made code more entangled. Just created two files from one. This is not a solution!!!!

Could you recall any example when scattering the code among directories solves something? Anything?

Separating concerns by files is as effective as separating school friendships by desks. Concerns are "separated" when there is no coupling: changing A wouldn't break B. Increasing the distance without addressing the coupling only makes it easier to add bugs.

And about “separating like Redux”? That over-separated, over-boilerplated, algebraic-effects-based “Resucks” code is something everyone hates, or sees no reason for. Verbose and booooring.

Well, we’ll see. :pokerface:

Solution 1 — Webpack Aliases

I like webpack aliases. They let you use absolute paths instead of relative ones. Just explain to webpack that api is src/common/api, and it will work. I hope you have done it a million times. Have you ever thought about how it works?

If you can configure api to be src/common/api, then, well, could you configure it to be something… different?

You may use webpack resolve aliases to replace ANY path with ANY path. It is not limited to “alias” and “absolute path”: it just lets you control module resolution.

And you may resolve api/customer to different files on the client and server sides. That is actually a basic thing for dependency mocking. You knew it.

Just moving stuff to another file gives you control over that stuff. You can override it. So – scattering the code solves something!

Dependency mocking could have helped you to distinguish client and server. Just a better code structure will give you more “mockable” code, and would save the day.

You may have the same “front” code, backed by different “back” code, fitting the current environment. Fitting perfectly.

Dependency mocking even powers Preact-compat! How can Preact and Preact-compat “transparently” replace React? Easy! Via the same webpack resolve alias. Yep, this is from the official Preact docs.

Mocking is AWESOME! And separating concerns by files is a thing.

Solution 2 — breaking singletons and side effects

So, I’ve written some scary stories about side effects, memoization and other stuff. I reckon dependency mocking will save this day too!

Let’s recall the “Singleton” problems:

- Reselect works only with “one” client

- Cross-render memoization data leakage

We know what the problem looks like. What could a possible solution look like? I mean – what do we want as a result?

Probably – if you were able to create a brand new component, isolated from others, and without any “history” – it would be enough to solve all the problems.

It is possible to achieve our goal by:

- Creating a new nodejs process, or worker. This works, but it is slow and too “isolated”, i.e. it could not get any benefit from the async nature of nodejs, since the current task is the only task (but that’s the goal, right?)

- Creating a new “VM” (sandbox) – the same, and even worse – sharing the same thread for all instances. The only benefit is a faster startup time.

- Running everything from scratch, as Jest does. It’s quite cool that tests in Jest do not affect each other. The question here is how Jest does it.

Jest is not only running tests in parallel, but also runs them in isolation from each other. Side effect from one test will not affect another. Singleton, created in one test, would be undefined for another.

Probably we need the same magic.

But, there is no magic. Let me just ask you — have you ever used Proxyquire? Or mockery, or rewire, or even webpack Hot Module Replacement?

They all utilise the “modules API”, and yes – modules, dependencies, and so on – have a real API at your beck and call.

In short – Jest, proxyquire or mockery just wipe the module cache, and then control the way you require modules again. Once you require any module again, you get a brand new version of it, executed from scratch, free from old values, caches and crap like that. Or a mock, since the main idea of dependency mocking is to return not the real module, but something you asked to return instead.

Kill it with fire!

Let’s imagine you have some module, which uses memoization, you cannot rely on. It just stores some stuff inside it. Like hello message in the current language. Of course current language never changes 😁

Wipe and Import. Wipe again and import again!

Every time you generate a new version of the component, as if you had a directory of similar files, with “scoped” memoization inside each.

Kill them with fire!

Let’s imagine you have a dependency, you could not rely on, and you have to make the same trick with it, and be free from side effects.

This is a bit harder, since you cannot just wipe it – you also have to wipe every module which used it, and every module which used those modules, and repeat the cycle again and again. You have to re-require everything from the ground up, and be able to replace a deeply nested module.

Thankfully there is a package, which could do this job for you, and not only for NodeJS, but also for Webpack*.

There is an even simpler solution – use a dependency mocking library, such as rewiremock, to handle everything for you:

As far as I know, only rewiremock does not wipe literally everything from the cache, and thus is actually usable for this case.

So – dependency mocking, and cache wiping as the reason why mocking libraries work at all – can break singletons and isolate side effects inside modules.

So what?

But there is an unanswered question – why is this better than “real” process isolation?

- First – it is faster. You do not have to spin up a new process or even a new stack.

- Second – it is faster. You may keep, not wipe, almost all node_modules, and thus spend less time re-evaluating js code again and again.

- Third – it is just “partially” “isolated”. You can still share some data with other “threads”. That could be something you don’t actually need, but a few things, like a persistent connection to a database, are always good to have. Or being able to have a local cache, like the JSS cache in MUI, speeding up their SSR 10 times.

And don’t forget – you may not only “wipe” dependency, but also “mock” dependency. You may replace Reselect with Redis/memcache-based persistent cross thread and cross server cache. You could do it!

But don’t forget – the absence of perfect isolation is the reason why renders could leak into each other. It is still a single vm, a single thread, and if any module requires any other module outside of the “mocking cycle”, it may get data (the required module itself) from another render.

That’s why it is important to:

- Clean the cache not only before requiring, but also after, so other “threads” cannot access your scope. (Mocking libraries do this as well.)

- In case of code splitting – use early synchronous resolution on the server side, to import everything in one mocking sequence. Not all “loaders” support it – it is not a “frontend” technique. Actually only react-imported-component will import deferred code synchronously on the server side – “normal” libraries run import only on component usage.

It’s still super easy to ruin everything. Too many packages were created and used without SSR in mind. 💩

The last part – the global “window” object

That’s the last demon to fight – “client side libraries like to use window, and having window as a global object is something client side libraries expect from you”. Even if “you” is the server side.

It is so sad that window is a global object. A field of global, magically available to any consumer. The only way to “mock” a global variable is to create a new vm stack. It’s how globals in nodejs, or in the browser, work: THEY ARE GLOBAL!

Oh, if only window would be a dependency. If only…

If only we could import window from 'give-me-a-window'!!!!

Then we could just use the same dependency mocking techniques to inject the window we need. Inside the magic “window” module we could return a real browser window, a jsdom mock, or some fake, good enough for us. We could ship a unique window per client, or safely mock something like… timers.

The same solution could work for document, or any other global variable. Sometimes it’s so cool to have a global variable – like express response or database connection – it’s just so painful to drill these props down, while there is nothing like React.Context.

And it would be sooooo cool to HAVE these per-instance, per-connection “global” variables at your beck and call. If only they were imported from modules we are able to control.

And, you know, it’s not a big thing to do – webpack, or a “nodejs” loader, could just inject one line of code into every file, and provide that “window” we could fully control.

For Webpack you could use the standard ProvidePlugin to inject not jQuery, but any “global” variable you need. For Nodejs it’s better to use Pirates, the library babel.js uses to “apply” es6-es5 transpilation.

Then window and document, the database connection or the express response would be local variables, and you would be able to control their values via dependency mocking. You would do the sort of DI you thought possible only in Spring Boot Java applications.

This works, because dependency mocking works. And it works, and works well, inside your tests, every time you jest.mock or proxyquire.load stuff. Tested by the tests themselves. And free from side effects.

It’s a Win!

So — separation of concerns, in terms of just moving stuff from one file to another, would enable dependency mocking. And dependency mocking would enable other things, you never thought about.

Dependency mocking is not a smell, and is not only for testing stuff. No – it’s just a powerful feature you may use.

Think of modules as micro-services, and of mocking as your docker-compose.

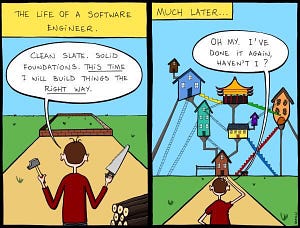

And on this picture you may see a quite good approach. It is just something you need….. :)

So — how?

1. To conditionally import client or server side API:

- for webpack use resolve.alias

- for nodejs use require-control

PS: conditional imports are the fastest option possible. Please prefer them.

2. To wipe some modules from a cache:

- for webpack and nodejs use wipe-{node|webpack}-cache. They have the same interface, and are quite simple to use

PS: sometimes wiping everything could be a better option. Using rewiremock to wipe the cache is the simplest solution, but not as performant as using wipe-node-cache directly (yet).

3. To inject globals as locals

- for webpack use ProvidePlugin

- for nodejs use Pirates

SSR: Dependency mocking is the answer! was originally published in Hacker Noon on Medium, where people are continuing the conversation by highlighting and responding to this story.
