
image by breakingpic on pexels.com

The Problem

Throughout my career, I have had to implement mechanisms for scheduling ad-hoc tasks a number of times. Often, these mechanisms are part of a much bigger system.

For instance:

- A tournament system for games would need to execute business logic when the tournament starts and finishes.

- An event system (think eventbrite.com or meetup.com) would need a mechanism to send out timely reminders to attendees.

- A to-do tracker (think Wunderlist) would need a mechanism to send out reminders when a to-do task is due.

The TL;DR is that I need a way to execute a piece of code at a specified point in time in the future. It's possible to do this in just about every programming language: for example, .Net has the Timer class and JavaScript has the setTimeout function. But I find myself wanting a service abstraction to work with instead. Sadly, AWS does not offer a service for this type of workload. CloudWatch Events is the closest thing, but not quite.
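For comparison, here is what the in-process approach looks like in Python (used for all sketches in this post), where `threading.Timer` plays the same role as .Net's Timer. The helper name `schedule_once` is hypothetical. The comment in the usage block points at the catch that motivates wanting a service: the pending task lives only in the memory of one process.

```python
import threading


def schedule_once(delay_seconds: float, task) -> threading.Timer:
    """Run `task` once, `delay_seconds` from now, inside this process."""
    timer = threading.Timer(delay_seconds, task)
    timer.daemon = True  # don't keep the interpreter alive just for this timer
    timer.start()
    return timer


if __name__ == "__main__":
    fired = threading.Event()
    schedule_once(0.1, fired.set)
    # The pending timer exists only in this process's memory: if the process
    # crashes or is redeployed before it fires, the task is silently lost.
    print("fired:", fired.wait(timeout=1.0))
```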

What is CloudWatch Events?

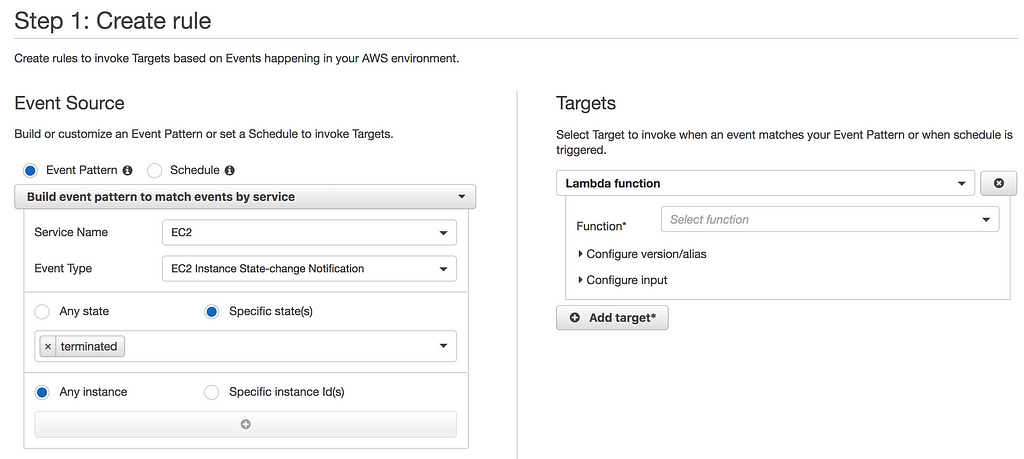

CloudWatch Events delivers a near real-time stream of system events that describe changes in AWS resources: an EC2 instance terminated, an ECS task started, a Lambda function created, and so on.

To react to these system events, you can subscribe a Lambda function to an Event Pattern. Whenever an event is matched, CloudWatch Events invokes the target Lambda function on your behalf.
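To make "matching an Event Pattern" concrete, here is a deliberately simplified matcher, a sketch rather than AWS's implementation. It assumes exact-value matching only: every leaf in the pattern is a list of allowed values, nested objects are matched recursively, and fields missing from the pattern are ignored. Real patterns support more (array-valued event fields, prefix and numeric matching), which this sketch does not handle. The pattern shown targets EC2 instance-termination events.

```python
from typing import Any, Dict


def matches(pattern: Dict[str, Any], event: Dict[str, Any]) -> bool:
    """Simplified Event Pattern matching: exact values only, nested dicts recurse."""
    for key, expected in pattern.items():
        if key not in event:
            return False
        actual = event[key]
        if isinstance(expected, dict):
            # Nested pattern: the event field must be an object that matches too.
            if not isinstance(actual, dict) or not matches(expected, actual):
                return False
        elif actual not in expected:
            # Leaf: the event value must be one of the allowed values.
            return False
    return True


terminated_pattern = {
    "source": ["aws.ec2"],
    "detail-type": ["EC2 Instance State-change Notification"],
    "detail": {"state": ["terminated"]},
}

sample_event = {
    "source": "aws.ec2",
    "detail-type": "EC2 Instance State-change Notification",
    "detail": {"instance-id": "i-0123456789abcdef0", "state": "terminated"},
}
```

Here `matches(terminated_pattern, sample_event)` is true, while the same event with `"state": "running"` would not match, so the target function would not be invoked.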

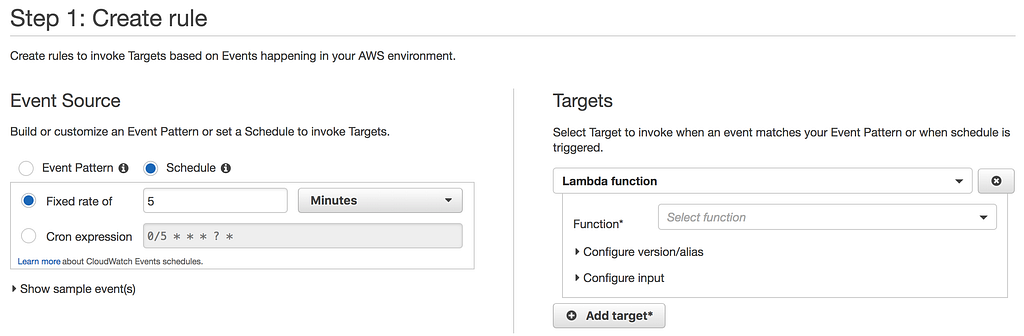

In addition, CloudWatch Events lets you create cron jobs easily.

This lets you invoke a Lambda function at a fixed rate (down to every minute), or on a custom schedule specified with a cron expression.
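A fixed-rate schedule is written as a rate expression such as `rate(5 minutes)`; the unit must be singular when the value is 1 and plural otherwise. The sketch below parses that form into a `timedelta` and lists idealized fire times from an assumed start time. It is only an illustration: the function names are hypothetical, cron expressions are not handled, and the real service does not promise to fire on exact second boundaries.

```python
import re
from datetime import datetime, timedelta
from typing import List

_RATE = re.compile(r"^rate\((\d+) (minute|minutes|hour|hours|day|days)\)$")
_KWARG = {"minute": "minutes", "hour": "hours", "day": "days"}


def parse_rate(expr: str) -> timedelta:
    """Parse a rate expression like 'rate(5 minutes)' into a timedelta."""
    m = _RATE.match(expr)
    if not m:
        raise ValueError(f"not a rate expression: {expr!r}")
    value, unit = int(m.group(1)), m.group(2)
    if value < 1:
        raise ValueError("rate value must be a positive integer")
    plural = unit.endswith("s")
    if plural == (value == 1):
        # 'rate(1 hours)' and 'rate(5 hour)' are both rejected.
        raise ValueError("use the singular unit for 1 and the plural otherwise")
    return timedelta(**{_KWARG[unit.rstrip("s")]: value})


def next_fire_times(expr: str, start: datetime, n: int) -> List[datetime]:
    """Idealized next n firing times after `start` for a rate expression."""
    step = parse_rate(expr)
    return [start + step * i for i in range(1, n + 1)]
```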

The problem with CloudWatch Events as a scheduling mechanism

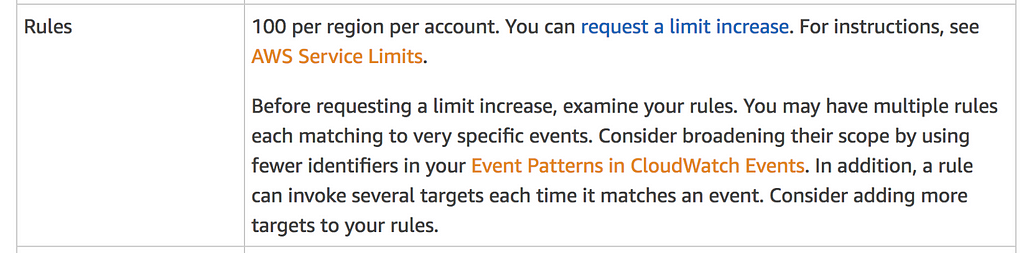

However, CloudWatch Events is not designed for running lots of ad-hoc tasks, each to be executed once, at a specific time.

The default limit on CloudWatch Events is a lowly 100 rules per region per account. It’s a soft limit, so it’s possible to request a limit increase. But the low initial limit suggests it’s not designed for use cases where you need to schedule millions of ad-hoc tasks.

CloudWatch Events is designed for executing recurring tasks.

Because there are no other suitable AWS services, I had to implement a scheduling service myself a few times in my career. I experimented with a number of different approaches:

- cron job (with CloudWatch Events)

- wrapping the .Net Timer class as an HTTP endpoint

- using SQS Visibility Timeout to hide tasks until they’re due
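As a rough sketch of the SQS variant: a consumer receives a message, reads its due time (assumed here to travel with the message, for example as a message attribute), and, if the task is not yet due, hides the message again via SQS's `ChangeMessageVisibility` call. Because SQS caps the visibility timeout at 12 hours, longer delays need several receive-and-hide rounds, and SQS's 14-day maximum message retention bounds how far ahead a task can be scheduled. The helpers below are hypothetical and only compute the timeouts; they do not call AWS.

```python
import math
from typing import List, Optional

# SQS caps a message's visibility timeout at 12 hours.
MAX_VISIBILITY_SECONDS = 12 * 60 * 60


def next_visibility_timeout(due_at: float, now: float) -> Optional[int]:
    """How long to hide a received-but-not-yet-due message; None means run it now."""
    remaining = due_at - now
    if remaining <= 0:
        return None
    return min(math.ceil(remaining), MAX_VISIBILITY_SECONDS)


def hide_plan(delay_seconds: float) -> List[int]:
    """Successive timeouts a consumer would apply to a message due in `delay_seconds`."""
    plan: List[int] = []
    now = 0.0
    while True:
        timeout = next_visibility_timeout(delay_seconds, now)
        if timeout is None:
            return plan
        plan.append(timeout)
        now += timeout  # the message becomes visible and is received again
```

For a task due in 30 hours, `hide_plan(30 * 3600)` gives two 12-hour hides followed by a 6-hour one. Each extra receive also increments the message's receive count, which matters if the queue has a redrive policy.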

Lately, I have seen a number of folks use DynamoDB Time-To-Live (TTL) to implement these ad-hoc tasks. In this post, we will take a look at this approach and see where it might be applicable for you.

How do we measure the approach?

For ad-hoc tasks like these, we normally care about:

- Precision: how close to my scheduled time is the task executed? The closer the better.

- Scale (number of open tasks): can the solution scale to support many open tasks? That is, tasks that are scheduled but not yet executed.

- Scale (hotspots): can the solution scale to execute many tasks around the same time? For example, millions of people set timers to remind themselves to watch the Super Bowl, so all the timers fire within close proximity to kickoff time.
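One way to turn these three criteria into numbers, sketched under the assumption that each task records when it was due and when it actually ran (epoch seconds, `None` while still open). The `Task` type and function names are hypothetical, and the one-minute bucket used for hotspots is an arbitrary choice.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List, Optional


@dataclass
class Task:
    task_id: str
    scheduled_at: float            # epoch seconds when the task was due
    executed_at: Optional[float]   # epoch seconds when it ran; None = still open


def precision(tasks: List[Task]) -> Dict[str, float]:
    """Mean and worst lag (actual minus scheduled) over executed tasks."""
    lags = [t.executed_at - t.scheduled_at for t in tasks if t.executed_at is not None]
    return {"mean_lag": mean(lags), "max_lag": max(lags)} if lags else {}


def open_tasks(tasks: List[Task]) -> int:
    """Tasks that are scheduled but not yet executed."""
    return sum(1 for t in tasks if t.executed_at is None)


def peak_due(tasks: List[Task], window_seconds: int = 60) -> int:
    """Largest number of tasks due within the same time window (the hotspot)."""
    buckets = Counter(int(t.scheduled_at // window_seconds) for t in tasks)
    return max(buckets.values(), default=0)
```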

DynamoDB TTL as a scheduling mechanism

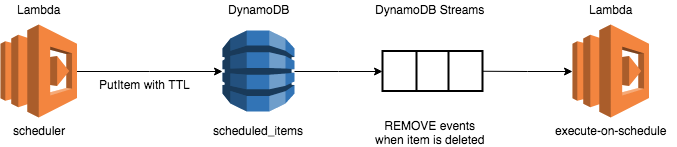

At a high level, this approach looks like this:

- A scheduled_items DynamoDB table which holds all the tasks that are scheduled for execution.

- A scheduler function that writes the scheduled task into the scheduled_items table, with the TTL set to the scheduled execution time.

- An execute-on-schedule function that subscribes to the DynamoDB Stream for scheduled_items and reacts to REMOVE events. These events correspond to items being deleted from the table.
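A minimal sketch of the two functions, assuming a `task_id` key, a JSON `payload` attribute, and a TTL attribute named `ttl` (all hypothetical names; the TTL attribute is whatever the table's TTL setting points at). Items are shown in DynamoDB's low-level attribute-value format, and the TTL value is a Number holding epoch seconds. The stream handler assumes the stream is configured with `OLD_IMAGE` or `NEW_AND_OLD_IMAGES` so that REMOVE records carry the deleted item. As an optional refinement beyond the post, it also skips deletes that were not made by the TTL process: records for TTL deletions carry a `userIdentity` of type `Service` with principal `dynamodb.amazonaws.com`. The extra `now` parameter exists only to make the sketch testable.

```python
import json
import time
from typing import Any, Dict, List, Optional

TTL_ATTRIBUTE = "ttl"  # hypothetical: the attribute configured as the table's TTL


def build_scheduled_item(task_id: str, payload: Dict, due_at: float) -> Dict:
    """scheduler: item for scheduled_items, in DynamoDB's attribute-value format."""
    return {
        "task_id": {"S": task_id},
        "payload": {"S": json.dumps(payload)},
        TTL_ATTRIBUTE: {"N": str(int(due_at))},  # epoch seconds, stored as a Number
    }


def is_ttl_delete(record: Dict) -> bool:
    """True if the stream record was produced by DynamoDB's TTL process."""
    identity = record.get("userIdentity") or {}
    return (identity.get("type") == "Service"
            and identity.get("principalId") == "dynamodb.amazonaws.com")


def handler(event: Dict, context: Any = None, now: Optional[float] = None) -> List[Dict]:
    """execute-on-schedule: run tasks whose items were removed by TTL."""
    now = time.time() if now is None else now
    executed = []
    for record in event.get("Records", []):
        if record.get("eventName") != "REMOVE" or not is_ttl_delete(record):
            continue  # ignore inserts, updates and manual deletes
        old = record["dynamodb"]["OldImage"]
        due_at = int(old[TTL_ATTRIBUTE]["N"])
        executed.append({
            "task_id": old["task_id"]["S"],
            "payload": json.loads(old["payload"]["S"]),
            "lag_seconds": now - due_at,  # how late the task ran
        })
    return executed
```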

Scalability (number of open tasks)

Since the number of open tasks just translates to the number of items in the scheduled_items table, this approach can scale to millions of open tasks.

DynamoDB can handle large throughputs (thousands of TPS) too. So this approach can also be applied to scenarios where thousands of items are scheduled per second.

Scalability (hotspots)

When many items are deleted at the same time, they are simply queued in the DynamoDB Stream. AWS also autoscales the number of shards in the stream, so as throughput increases, the number of shards goes up accordingly.

However, events within a shard are processed in sequence. So it can take some time for your function to get to a given event, depending on:

- its position in the stream, and

- how long it takes to process each event.

So, while this approach can scale to support many tasks all expiring at the same time, it cannot guarantee that tasks are executed on time.
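A back-of-the-envelope model of that delay, under stated assumptions: all tasks expire at the same instant, their REMOVE events are spread evenly across the stream's shards, and each shard's events are handled strictly one after another at a fixed cost per event. It ignores batching and the TTL deletion delay itself, so treat it as a rough illustration with made-up inputs, not a prediction.

```python
import math


def worst_case_lag(tasks_due: int, shards: int, seconds_per_event: float) -> float:
    """Extra delay for the last of `tasks_due` simultaneous tasks, from stream processing alone."""
    if shards < 1:
        raise ValueError("need at least one shard")
    per_shard = math.ceil(tasks_due / shards)  # events queued on the busiest shard
    return per_shard * seconds_per_event
```

For instance, 1,000 tasks over 4 shards at 50 ms per event leaves the last task about 12.5 seconds behind, on top of however late the TTL process deleted it.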

Precision

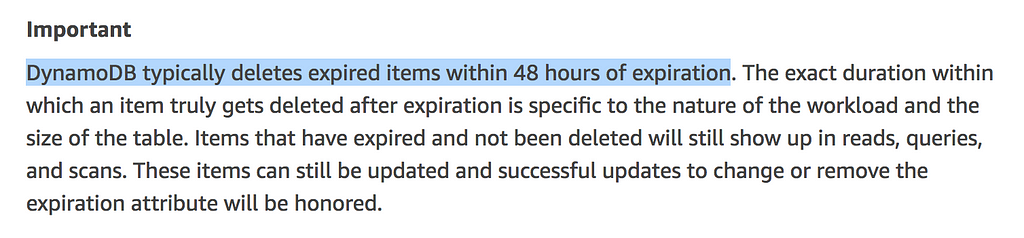

This is the big question about this approach. According to the official documentation, expired items are typically deleted within 48 hours of expiration. That is a huge margin of error!

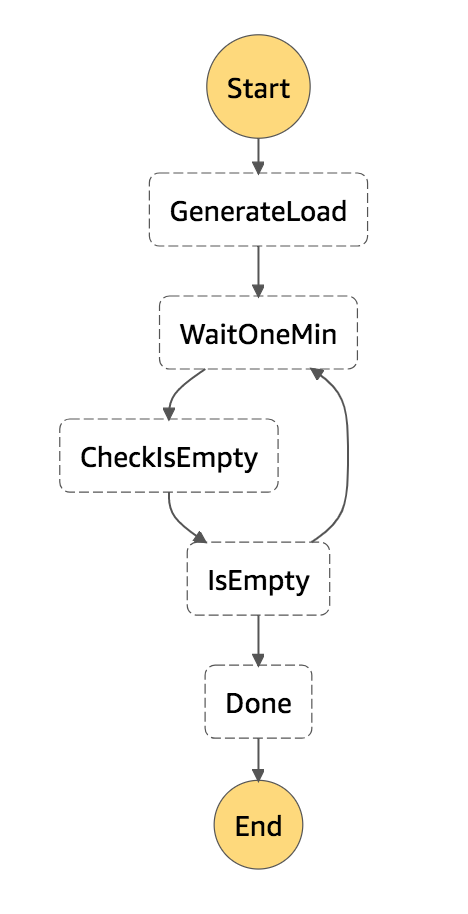

As an experiment, I set up a Step Functions state machine to:

- add a configurable number of items to the scheduled_items table, with TTL expiring between 1 and 10 mins

- track the time the task is scheduled for and when it’s actually picked up by the execute-on-schedule function

- wait for all the items to be deleted
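The experiment itself used a Step Functions state machine; the Python sketch below is only a stand-in for its first and last steps. It generates items due uniformly between 1 and 10 minutes ahead and polls until nothing is left. Function names, the 60-second poll interval and the 3-hour timeout are assumptions. `remaining` would wrap whatever count of scheduled_items you use, and `sleep` is injectable so the loop can be tested without waiting.

```python
import random
import time
from typing import Callable, Dict, List


def make_experiment_items(n: int, now: float, seed: int = 0) -> List[Dict]:
    """n items whose TTL expires uniformly between 1 and 10 minutes after `now`."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    items = []
    for i in range(n):
        due_at = now + rng.uniform(60, 600)
        items.append({
            "task_id": f"task-{i}",
            "scheduled_at": due_at,  # kept for measuring lag later
            "ttl": int(due_at),      # epoch seconds, as DynamoDB TTL expects
        })
    return items


def wait_until_drained(remaining: Callable[[], int],
                       poll_seconds: float = 60.0,
                       timeout_seconds: float = 3 * 3600,
                       sleep: Callable[[float], None] = time.sleep) -> bool:
    """Poll `remaining()` until it reports 0; False if `timeout_seconds` elapses first."""
    waited = 0.0
    while remaining() > 0:
        if waited >= timeout_seconds:
            return False
        sleep(poll_seconds)
        waited += poll_seconds
    return True
```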

The state machine looks like this:

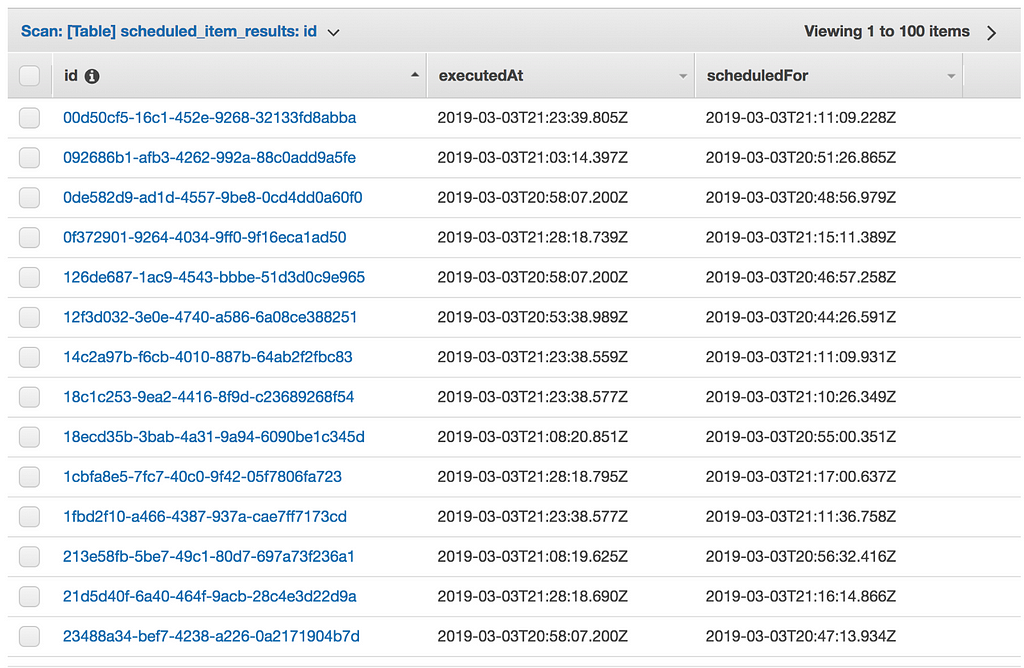

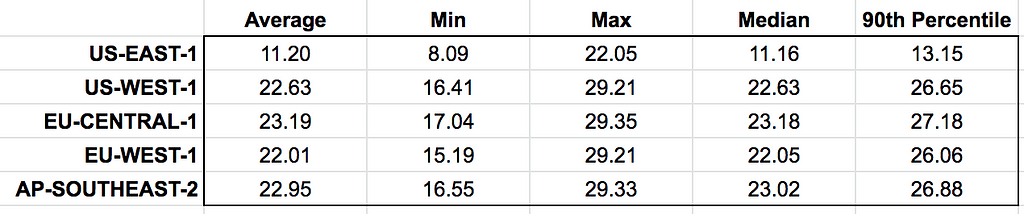

I performed several test runs. The results are consistent regardless of the number of items in the table. A quick glance at the table tells you that, on average, a task is executed over 11 mins AFTER its scheduled time.

I repeated the experiments in several other AWS regions:

I don't know why there is such a marked difference between US-EAST-1 and the other regions. One explanation is that the TTL process requires a bit of time to kick in after a table is created. Since I was initially developing against the US-EAST-1 region, its TTL process had been "warmed" compared to the other regions.

Based on the results of my experiment, it would appear that using DynamoDB TTL as a scheduling mechanism cannot guarantee reasonable precision.

On the one hand, the approach scales very well. On the other, the scheduled tasks are executed at least several minutes late, which renders it unsuitable for many use cases.

Like what you're reading? Why not check out my video course with Manning or hire me?

In the video course we will cover topics including:

- authentication & authorization with API Gateway & Cognito

- testing & running functions locally

- CI/CD

- log aggregation

- monitoring best practices

- distributed tracing with X-Ray

- tracking correlation IDs

- performance & cost optimization

- error handling

- config management

- canary deployment

- VPC

- security

- leading practices for Lambda, Kinesis, and API Gateway

You can also get 40% off the face price with the code ytcui. Hurry though, this discount is only available while we’re in Manning’s Early Access Program (MEAP).

DynamoDB TTL as an ad-hoc scheduling mechanism was originally published in Hacker Noon on Medium, where people are continuing the conversation by highlighting and responding to this story.
